Artificial intelligence has been framed by the Trump administration as ushering in a “new golden age of human flourishing, economic competitiveness and national security” for the U.S. should it win the race for computing systems that perform tasks normally requiring human intelligence.

But with the country’s drive to build up its AI ecosystem come increased risks, both digital and physical.

Faruk Dziho, the business intelligence analyst and data solutions lead for the Texas Reliability Entity, says that while AI can strengthen grid reliability, it also creates risks if it is not managed properly.

“At the end of the day, it’s just a tool, and it’s not a replacement for engineers or operators. It excels at pattern recognition and forecasting when there is clean data,” he said during a Talk with Texas RE webinar March 10. “When it comes to some risk-mitigation strategies, there needs to be a robust data governance in building high-quality data pipelines with clear ownership, extensive validation, model transparency and oversight.”

Texas RE added AI integration as a “moderate” risk in its 2024 Reliability Performance and Regional Risk Assessment, released in June 2025, saying the risks are “currently relatively unlikely to manifest themselves.”

“As AI increases in scale and integration, however, associated risks may increase in both likelihood and impact,” the regional entity wrote.

“That assessment itself is not set in stone. As adoption expands across the industry, both the probability and severity of those risks may rise,” Dziho said. “We’ll continue to monitor developments and adjust the assessments as the technology evolves.”

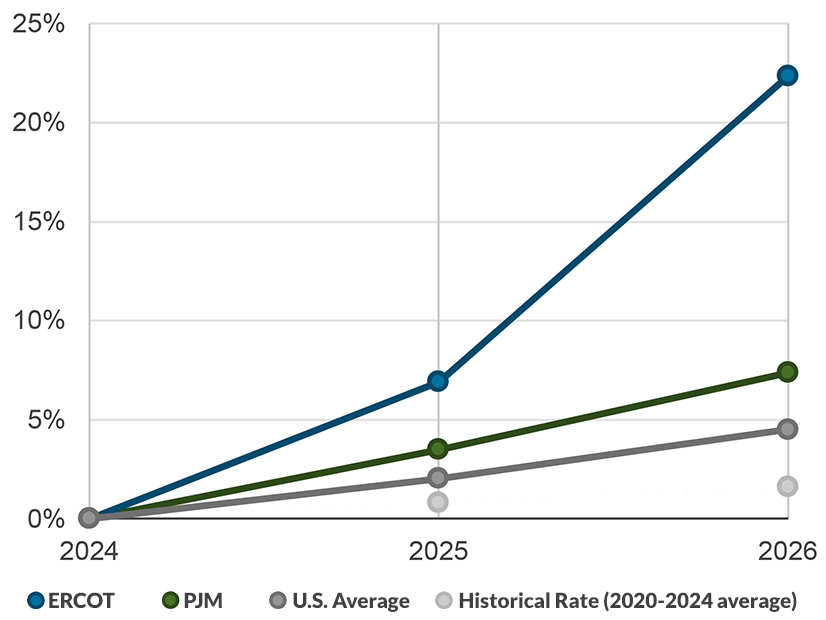

Demand in the Texas Interconnection grew faster than in any other region in 2025, increasing 5% through September compared to the same period in 2024, according to the U.S. Energy Information Administration. The agency has said it expects demand to increase by more than 9% in 2026.

Dziho told his online audience that AI carries risks that demand robust mitigation strategies. AI-driven load growth could lead to cybersecurity vulnerabilities that can be exploited faster with AI-powered tools and systems, adaptive malicious code that bypasses security controls, and data poisoning.

“Artificial intelligence basically makes it easier for attackers to generate phishing attacks by generating thousands of personalized emails instantly or scanning for vulnerabilities faster,” he said.

Because AI systems collect massive amounts of data that typically include confidential or sensitive information, data privacy controls must be effective in reducing the risk of breaches, Dziho said.

“We need to secure artificial intelligence workflows,” he said. “The field is changing constantly. There are new threats that we find out daily.”