By Peter Kelly-Detwiler

Leading data companies met with the Trump administration recently and signed a Ratepayer Protection Pledge, in which “companies agree to protect American consumers from price hikes due to data center energy and infrastructure requirements, and lower electricity costs for consumers in the long term.”

The White House website declared that “President Trump is calling on the leading United States hyperscalers and AI companies to build, bring, or buy all of the energy needed for building and operating data centers, paying the full cost of their energy and infrastructure, no matter what” to protect other ratepayers.

The five elements of the non-binding pledge committed the companies to:

- Pay the full cost of associated supply resources.

- Pay for all new required power delivery infrastructure upgrades.

- Pay for power assets and supply infrastructure whether or not they consume power.

- Invest in the local communities hosting data centers.

- Coordinate with grid operators to contribute to a more reliable grid and, when possible, deploy backup generation to shore up the grid during scarcities.

That Horse Got Out of the Barn Some Time Ago

The meeting and associated pledge were perhaps good theater but would have been more valuable a year or two ago. That’s when interconnection requests were already flooding the energy landscape and utilities were negotiating the first tariffs addressing large loads. AEP Ohio started that parade in May 2024, when it asked the Public Utilities Commission of Ohio to bless a proposal that would “enhance financial obligations that data centers must undertake to support infrastructure that serves them.”

Perhaps unsurprisingly, the data center crowd strongly opposed the proposed tariff, with data companies and some generators offering a counterproposal that was largely rejected. Ultimately, regulators approved a tariff requiring new large data center loads to pay for at least 85% of their forecast capacity, a take-or-pay approach meant to cover the cost of associated infrastructure.

The tariff included a four-year ramp period during construction followed by eight years of full service, as well as exit fees and a requirement for proof of financial viability. The data center companies ultimately acceded to the terms, and as of February, AEP reported that 5,642 MW of contracts had been signed under its Data Center Tariff.
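To make the take-or-pay mechanics concrete, here is a minimal Python sketch. It assumes a deliberately simplified rule, not the actual tariff language: in each billing period the customer is billed for the greater of its actual demand or 85% of its contracted capacity. The function names and the 500 MW example customer are hypothetical, and ramp schedules, exit fees and collateral requirements are not modeled.

```python
# Simplified take-or-pay sketch (hypothetical). Real large-load tariffs add
# ramp schedules, exit fees, collateral and other terms not modeled here.


def billed_demand_mw(actual_mw: float, contracted_mw: float, min_share: float = 0.85) -> float:
    """Return the demand a large-load customer is billed for in one period.

    The customer pays for whichever is larger: the demand it actually used,
    or a minimum share (85% by default) of the capacity it contracted for.
    """
    if not 0.0 <= min_share <= 1.0:
        raise ValueError("min_share must be between 0 and 1")
    return max(actual_mw, min_share * contracted_mw)


def unused_but_paid_mw(actual_mw: float, contracted_mw: float, min_share: float = 0.85) -> float:
    """Demand the customer pays for without using it (zero once usage exceeds the floor)."""
    return billed_demand_mw(actual_mw, contracted_mw, min_share) - actual_mw


if __name__ == "__main__":
    contracted = 500.0  # hypothetical 500 MW data center
    for actual in (100.0, 425.0, 480.0):
        billed = billed_demand_mw(actual, contracted)
        gap = unused_but_paid_mw(actual, contracted)
        print(f"used {actual:.0f} MW, billed {billed:.0f} MW, paid-but-unused {gap:.0f} MW")
```

Under this simplified rule, a hypothetical 500 MW customer drawing only 100 MW would still be billed for 425 MW. The floor is what shifts the risk of an underused facility onto the data center rather than onto other ratepayers.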

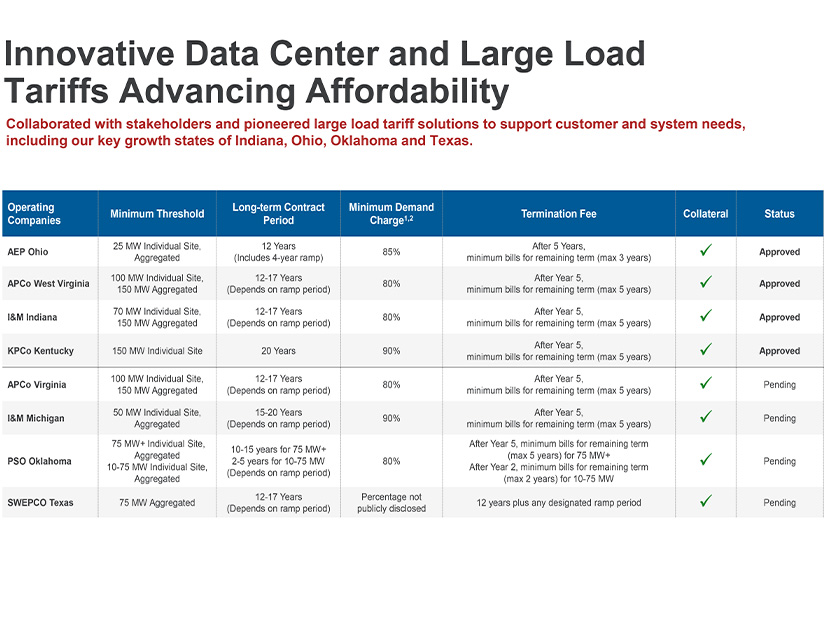

Once that regulatory model was approved, utilities elsewhere recognized they had leverage. The construct spread to other markets and later contracts showed even more teeth. AEP, for example, recently highlighted approved tariffs in four of its U.S. service territories, with some including 20-year contract periods and take-or-pay provisions as high as 90% of total demand. It has another four such tariffs awaiting regulatory approval.

Other utilities pushed similar approaches. For example, Michigan’s DTE instituted a 19-year contract for a 1,383-MW data center that includes an 80% take-or-pay provision and a termination period of up to 10 years. Consumers Energy, also in Michigan, received approval in December for a large load tariff that includes a minimum contract length of 15 years and a take-or-pay provision at 80% of forecasted demand.

Evergy and Ameren in Kansas recently received approval for a 17-year large load contract with collateral requirements, 80% take-or-pay requirements, and exit fees meant “to ensure large load customers contribute their fair share of costs and minimize risk to other customers.”

The pledge’s call for coordination with grid operators is also being addressed independently in multiple jurisdictions. In Texas, for example, Senate Bill 6 includes a so-called “kill switch” to ensure ERCOT can curtail large loads such as data centers during grid emergencies.

Meanwhile, PJM is exploring a parallel capacity auction (with administration support), and the PJM Board has proposed a series of decisions related to connecting new data center load. Utilities, state regulators and grid operators across the country are keenly focused on these issues. Pledge or no pledge, they will eventually sort them out because they must.

Too Much Demand Chasing Too Few Goods

That does not mean ratepayers are safe from this massive wave of data center development. For one, there is the simple inflationary dynamic that results when demand for infrastructure (and related labor) outstrips supply.

Even non-grid-connected data centers will affect ratepayers, just indirectly. One cannot have tens of GWs of new demand for turbines, transformers, switchgear and natural gas mushroom up out of nowhere and expect prices to remain flat. They won’t, so it’s best to forget that fiction.

Time Frame Mismatches, Consolidation, and Technological Risks

There are other risks as well, including stranded asset risk. Even if data companies pay their way for the length of an initial contract, cost recovery for many utility assets stretches beyond 30 years, so there is considerable risk in the out-years if long-term demand does not hold steady or grow.
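The mismatch between contract terms and asset lives can be sketched with simple arithmetic. The Python below is a rough illustration under stated assumptions, not a utility rate-making model: it assumes straight-line cost recovery over a 30-year asset life (the low end of the range the article cites), that the data center stops paying when its contract ends, and that no exit fee or replacement customer covers the remainder. The function name is hypothetical; the contract lengths are the ones mentioned earlier in the article.

```python
# Simplified stranded-asset sketch (hypothetical). Assumes straight-line cost
# recovery and that the data center stops paying when its contract ends, with
# no exit fees and no replacement customer. Real rate-making (return on
# equity, depreciation schedules, exit fees) is more complex.


def unrecovered_share(asset_life_years: float, contract_years: float) -> float:
    """Fraction of an asset's cost not yet recovered when the contract ends."""
    if asset_life_years <= 0:
        raise ValueError("asset_life_years must be positive")
    if contract_years < 0:
        raise ValueError("contract_years must be non-negative")
    # A contract longer than the asset life recovers the full cost, hence min().
    recovered = min(contract_years, asset_life_years) / asset_life_years
    return 1.0 - recovered


if __name__ == "__main__":
    asset_life = 30.0  # lower bound; recovery periods often run longer
    # Contract lengths cited above: AEP Ohio (4 + 8 years), Consumers Energy,
    # Evergy/Ameren, DTE, and AEP's longest approved terms.
    for years in (12, 15, 17, 19, 20):
        share = unrecovered_share(asset_life, years)
        print(f"{years}-year contract: {share:.0%} of cost still unrecovered")
```

Under these assumptions, a 12-year term leaves 60% of a 30-year asset’s cost unrecovered when the contract ends, and even a 20-year term leaves about a third. A longer asset life makes the gap larger, while exit fees would shrink it.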

More immediately, there is also the risk that business models will change, or that the industry will see considerable consolidation among the many players fighting for supremacy in the large language model (LLM) AI landscape. Each LLM developer is playing to win in a very high-stakes game, and not all are likely to succeed.

Bankruptcies or buyouts could leave billions of dollars in stranded assets. The “dark fiber debacle” of the 1990s, in which tens of billions of dollars were spent on an overbuilt fiber optic network that took more than a decade for demand to grow into, should teach us to be cautious.

We also saw a similar dynamic play out in the search engine space more than two decades ago, with few winners and numerous losers. And if you are skeptical of that assertion, you would do well to go Ask Jeeves.

There’s also the uncertainty of a constantly evolving technology. Chips keep advancing, cooling systems evolve and design approaches shift. Generative AI has only been a mass-market phenomenon since ChatGPT, running on GPT-3.5, popped out of the box in November 2022, kicking off an AI arms race that has accelerated so quickly nobody knows where it’s going.

Leading chipmaker Nvidia’s GTC conference in March offered an indication of just how fast the field is evolving. CEO Jensen Huang’s keynote indicated that the company’s hardware and software are being developed specifically with energy efficiency in mind. Depending on the task, new hardware could improve performance per watt by between 2x and 35x, and advanced software further accelerates some of these gains.

The approach to developing AI models also may be changing. In this country, training LLMs that “learn to think” like humans has typically meant throwing a combination of chips, raw data and massive amounts of power at the problem in a “brute force scaling” approach. But that may not prove to be the best avenue in the future.

Other approaches may merit consideration, as China’s DeepSeek demonstrated by relying on better algorithms to achieve its results. Baidu’s ERNIE X1, also from China, built further on this approach to reasoning and claims to offer similar performance at a lower cost.

U.S. labs appear to be adapting to take advantage of some of these lessons, adopting open-source algorithms originally used by Chinese competitors (though brute force still matters today, especially for processing huge amounts of video and other data).

Dark Horses

Then there are other uncertainties that may either become historical footnotes or significantly change the industry’s trajectory. To take one example, Yann LeCun, the high-profile AI researcher who formerly served as Meta’s chief AI scientist, recently raised $1.03 billion for his Advanced Machine Intelligence Labs.

The company is predicated on a bet that LLMs ultimately won’t prove to be the winning approach to creating intelligent systems. Rather than relying on text-based models to achieve human-like reasoning, LeCun’s team will develop models trained on visual and spatial data. If they prove right, a lot of capital may have been allocated in the wrong direction, putting investments in supporting energy systems at risk.

There is also the emerging use of photonics — the use of light, rather than electrons, to process and transmit data. Utilizing light dramatically reduces the heat produced by chips because one no longer is driving electrons through tiny copper wires or silicon transistors.

Photonics can also eliminate the need to constantly switch transistors on and off, an action that consumes energy with each cycle. Finally, photonics can beat today’s approach of using copper wire, which sends signals one at a time. With light, multiple signals can travel simultaneously on different wavelengths, reducing the energy required.

Photonics is not yet fully developed or commercialized, but Nvidia is already using “co-packaged optics,” in which optical transceivers sit next to electronic switching chips in AI data centers. This use of light cuts networking power consumption by up to a factor of five.

There is a great deal of optimism around the technology, with significant investment pouring into the field. In July 2025, German researchers demonstrated the world’s first photonic processor for high-performance and AI computing. It’s too early to tell whether the technology will hit the mainstream anytime soon. The point here is simply that computing technology — aided by advances made within AI itself — will continue to evolve.

A Pledge Offers Only Limited Protection

Looking across the landscape at this very early stage of a rapidly developing AI industry, we see uncertainty on multiple levels. As a consequence, White House pledges and data company commitments to protect other ratepayers may offer some benefit. But they protect us rate-paying pawns only for the first few moves in a vast, multitrillion-dollar global game of high-stakes chess whose outcome is uncertain and which brings both promise and peril to the grid of tomorrow.