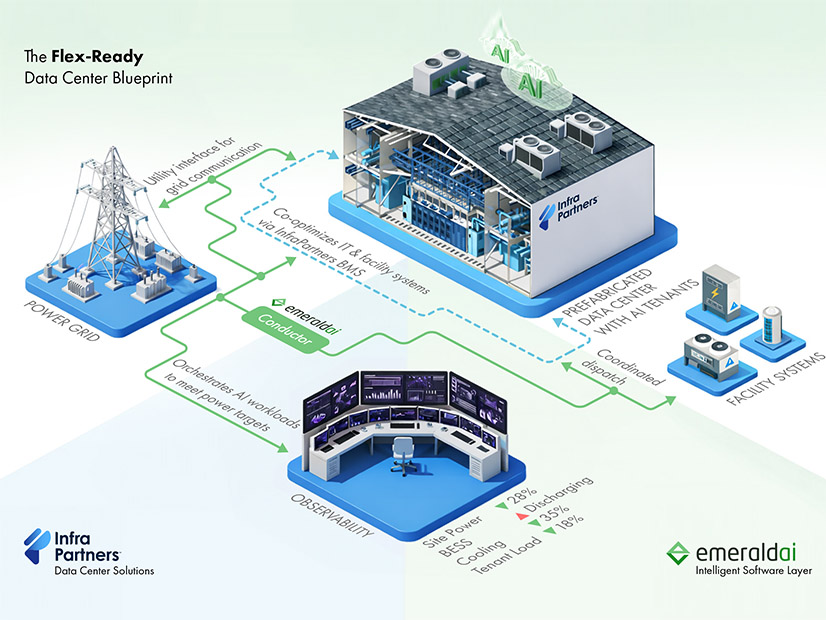

Digital infrastructure firm InfraPartners and Emerald AI announced a new partnership to construct data centers designed to be flexible grid assets.

The Flex-Ready Data Centers combine Emerald’s energy management software with InfraPartners’ approach of building and upgrading data centers through off-site manufacturing, the companies announced March 10.

“The innovation here is to put together the data center design with the needs of the software from the beginning, so that the data center is delivered as a flex-ready data center, so there is no retrofitting later,” Emerald’s chief scientist, Ayse Coskun, said. “There are no additional components needed later.”

The software needs telemetry from every part of the data center, including computing, cooling, any behind-the-meter generation or storage, and any other electricity use at the facility, she said.

The main incentive for data centers to become flexible grid assets is speed to power, that is, how quickly they can secure the electricity they need, but flexibility also offers clear benefits for grid operations, InfraPartners Chief Technology Officer Harqs Singh said.

The Electric Power Research Institute has “a data center flex program with all the utilities in it, and so they’re very interested in being able to have data centers become assets, rather than just consumers,” Singh said. (See EPRI Launches DCFlex Initiative to Help Integrate Data Centers on the Grid.)

With Emerald’s energy management services, data centers can adjust their consumption to energy availability, follow the output of intermittent renewables or simply respond to prices, Coskun said.

“So, this interface enables not just speed to power, but more broadly a more amicable relationship between the large data center loads and the grid,” she added.

Compared with “traditional” data centers (those used for cloud computing and data storage), AI data centers have a very high “power density,” which is why they have made headlines for massive loads ranging from hundreds of megawatts to gigawatts.

“The power density of a rack — a cabinet of servers — is increasing like 10 times compared to a typical cloud rack,” Coskun said. “The AI data centers are running a mix of training, inference and other AI loads, and there are differences. For instance, training loads tend to be more spiky, changing the power up and down more rapidly compared to cloud loads.”

Cloud computing data centers must respond to consumer requests, such as when someone accesses a database or streams video, while AI data centers run more batch processing and long-running training jobs and use their computing hardware more heavily, she added. Energy management techniques can help smooth out their highly variable demand.

“I consider this a welcome side effect of controlling power that the spikes are reduced,” Coskun said. “Because essentially, it’s not only necessarily just reducing the power during a high demand time, but also you can set up overall power limits to gently curb the power without adversely impacting performance, at least beyond the performance constraints, and then reduce these spikes.”

The grid does not respond well to large, fast fluctuations in demand or supply, so flexibility can make AI data centers much easier to handle on the bulk power system, she said. Energy management can also smooth the power ramps caused by spikes during training, as well as the ramps that occur when data centers respond to signals from the grid itself.

“In our work so far with power grid operators and utilities, we received both requests: ‘can you reduce the power over a gradual window of 10 to 15 minutes? We don’t want to see this sharp drop,’” Coskun said, and “‘can you respond within seconds in an emergency, if needed?’ And we demonstrated both. So, there’s flexibility on how quickly we can tune power as needed, depending on the needs of the grid.”

InfraPartners can build this capability in from the start because it manufactures data center infrastructure at a central site and then deploys it where needed, Singh said. That approach helps with initial construction and also with later upgrades, as newer chips keep arriving and existing chips wear out and need to be replaced.

“We are going to have to be a lot more agile,” Singh said. “We’re going to have to adapt a lot more.”

The biggest constraint the industry faces now is power supply, and one way of handling that will be to install more efficient chips as they become available, he said.

“That means that the data center needs to evolve to deploy the latest chips all the time,” Singh said, “and being really good grid partners, working with the grid, showcasing to them how are the loads changing. How do we manage our assets on the data center side with grid assets, such that we’re good partners and be able to power the performance improvements that are coming? … It’s what we call ‘the upgradeable data center’: having a data center that upgrades with different chip technologies that are coming.”

Many chip contracts last about five years, but how often the chips will actually be swapped out remains somewhat uncertain, he added.