As the astonishing and unanticipated tsunami of AI-driven data centers washes across the electric utility landscape, the conversation continues to evolve — especially as it relates to the rest of us who share that power grid. It may soon be that data centers will rule the electric world, while the rest of us only live in it. And a proposed new metric — the “compute heat rate” — soon may make that abundantly clear.

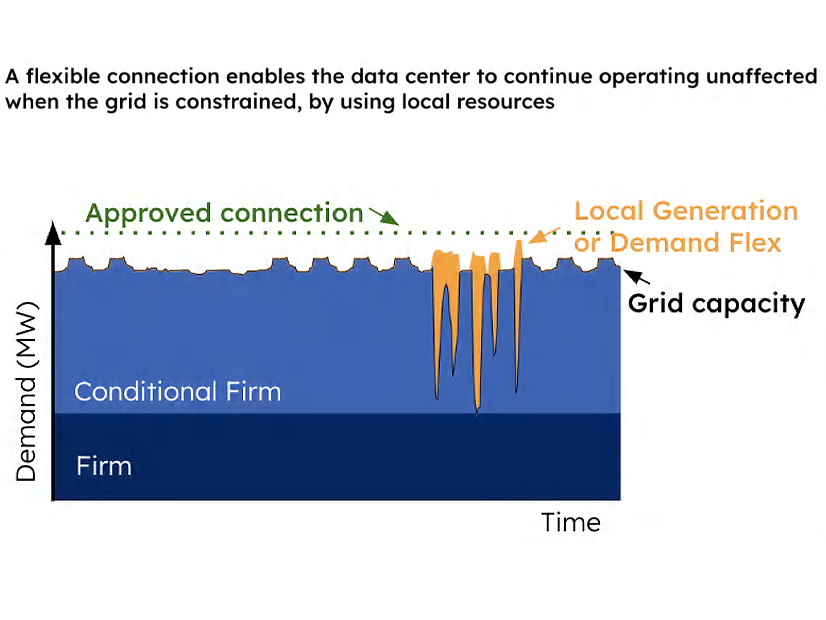

There has been a great deal of discussion related to data center loads and their impact on other ratepayers, with a recent and growing focus on the flexibility of operations. The argument for flexibility is that data centers wishing to interconnect to a capacity-constrained grid could reduce additional grid stress if they were able to interact more flexibly with it.

In theory, this could be done in two ways — through the addition of energy storage or through flexible compute operations. A recent paper on flexible operation of data centers notes that most grid scarcity events are relatively short, so adopting such approaches could significantly reduce grid stresses.

To that end, leading chipmaker NVIDIA and software company Emerald AI are pushing an initiative to “power and advance a new class of AI factories” that could connect to the grid faster and “support the grid” through flexible operation.

NVIDIA says it will employ a new reference design to help modulate demand and coordinate flexible load, while Emerald AI’s software platform will orchestrate the required computational flexibility. With this power-flexible approach, NVIDIA claims up to 100 GW of capacity could be freed up in the U.S. grid.

Flexible Operation Might Just be the Cost of Admission

A 2025 study from Duke University’s Nicholas Institute generally supports that 100-GW figure, calculating that with an average annual load curtailment rate of 0.5%, 98 GW of new load could be added to the grid. A 2026 follow-on study stated that flexible operation can feasibly reduce system costs by tens of billions of dollars over the next decade, lowering electricity prices for all participants, by flowing more megawatt-hours across the system without putting additional pressure on system peaks.

To date, the evidence that flexible operation is possible remains limited. In July 2025, as part of the Electric Power Research Institute’s (EPRI) DCFlex program, an Emerald AI-guided data center cut power use by 25% during three hours of peak grid demand while maintaining AI compute quality. As of late March, Emerald AI claimed to have proven similar flexible operations at five commercial data centers. It’s also collaborating with PJM, Digital Realty, NVIDIA and EPRI on a flexibility initiative in NVIDIA’s 96-MW Aurora data center in Virginia, expected to be online by mid-2026.

During the recent CERAWeek, EPRI unveiled Flex MOSAIC — a common framework shared by 30 institutions for classifying large load flexibility to help develop shared expectations across the industry as to how flexibility will be used.

The approach would focus on performance characteristics, such as notification time, duration, frequency of use, depth of load adjustment, ramp behavior and availability — in other words, pretty much what professionals in the demand response world have been asking from enrolled DR assets for the past two decades. Put another way, the animal must perform pretty much the same old trick, but it matters more when it’s done by an elephant than a mouse.

It makes perfect sense for a data center to adopt this approach, especially if that’s the price they must pay for being invited to the grid. That invitation is pretty valuable these days, with “speed to power” being the critical imperative.

Astrid Atkinson, the CEO of Camus Energy — a company devoted to promoting flexible grid interconnections — recently said that “our number for the opportunity cost or corresponding value of getting a data center online a year earlier is about $7 billion for a gigawatt of capacity per year.” (Yes, you read that correctly: $7 billion in value for the ability to access power 365 days earlier — a little over $19 million/day.)

Just Don’t Expect Data Loads to Curtail Voluntarily

In theory, some of these new AI-focused data centers can operate at least part of their load flexibly. However, we don’t know what this means for the massive 100-plus-MW data centers that train large language models, versus the smaller data centers serving AI inference loads that put those models to work in the real world.

There is reason to suspect, though, that their willingness to operate flexibly within the power grid might not be as high as one might hope, and they may have to be contractually bound to do so.

That’s because once they are running and performing their alchemy — employing silicon to weave data and electricity into valuable information — these AI factories have extremely high opportunity costs. The value of their output may in fact be so high that they are willing to shut down only at astronomical prices.

This concept of AI data center price elasticity is relatively new. We generally know what it is for price-sensitive cryptocurrency miners. They are estimated by the Energy Information Administration to curtail electricity use at a price around $100/MWh, depending on the prevailing price for the currency. But that price sensitivity has only recently begun to be explored for other data loads.

These Aggregated Loads are Too Big to Ignore

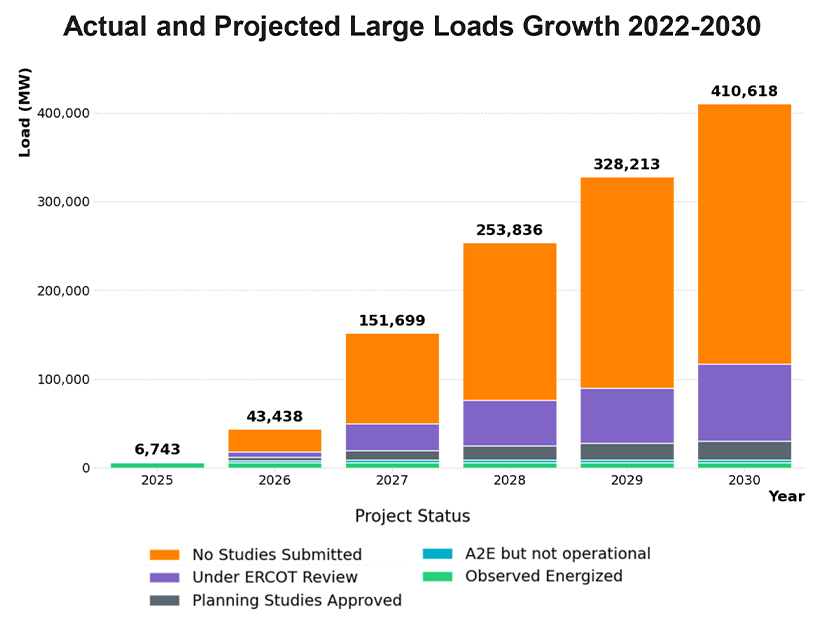

If these loads were insignificant, such price elasticities might not matter much. But since those loads are enormous and coming quickly, they do matter, and a lot. As of April 1, for example, ERCOT is “tracking approximately 410 GW of large loads seeking interconnection, of which about 87% are data centers.” To put that in perspective, ERCOT’s record peak demand to date was 85,500 MW, or 85.5 GW.

We’ve already seen the destabilizing impact of forecast data loads in PJM, where they have completely upended the past three capacity markets. About $23 billion of additional capacity revenues are estimated to come from existing and projected data loads. That’s capacity, where suppliers play in the market.

But what about energy markets, where buyers step in? How much are AI data centers willing to pay for power before they shut down? And what impact might that have on everybody else in the market?

A New Metric Defining Opportunity Costs and Willingness to Pay for Power

Some fascinating work is being done in this area by Hans Royal, an industry veteran who recently published a paper introducing the concept of a compute heat rate (CHR). The CHR is a metric that attempts to quantify “the maximum electricity price a data center operator can rationally pay before the computation running on that electricity becomes uneconomic.” It’s a way to measure price sensitivities.

For decades, the energy industry has been using the concept of a heat rate as a measure of a power plant’s thermal efficiency in converting a fuel’s heat potential into electricity. The heat rate is defined as the Btu in a fuel required to generate 1 kWh of electric energy.

Heat rates are commonly employed, with traders and plant operators using them to calculate the “spark spread” — the estimated profitability of a plant based on the prevailing prices of gas and electricity. The lower the heat rate, the more efficient the power plant is.
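To make the spark spread concrete, here is a minimal sketch of the usual simplified calculation: the power price minus the fuel cost implied by the heat rate. The function name and the sample prices and heat rates are illustrative assumptions, not figures from Royal’s paper, and the calculation ignores non-fuel costs such as operations and maintenance.

```python
def spark_spread(power_price, gas_price, heat_rate_btu_per_kwh):
    """Simplified spark spread in $/MWh.

    power_price: electricity price in $/MWh
    gas_price: natural gas price in $/MMBtu
    heat_rate_btu_per_kwh: plant heat rate in Btu/kWh
    """
    # Btu/kWh -> MMBtu/MWh: x 1,000 kWh per MWh, / 1,000,000 Btu per MMBtu
    heat_rate_mmbtu_per_mwh = heat_rate_btu_per_kwh / 1000.0
    fuel_cost_per_mwh = gas_price * heat_rate_mmbtu_per_mwh
    return power_price - fuel_cost_per_mwh


# Hypothetical inputs: $50/MWh power, $4/MMBtu gas.
efficient = spark_spread(50.0, 4.0, 7000)     # 50 - 4 * 7  = 22 $/MWh
inefficient = spark_spread(50.0, 4.0, 10000)  # 50 - 4 * 10 = 10 $/MWh
```

At the same prices, the plant with the lower heat rate keeps more margin per megawatt-hour, which is why a lower heat rate signals a more efficient plant.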

Royal notes that in addition to crypto miners, other traditional large loads — such as aluminum smelters, steel producers and chemical manufacturers — also exhibit price sensitivities. Their economics won’t justify higher prices, and depending on the industry they typically shut down at “relatively low price thresholds” that he quantifies as between $40 and $120/MWh, in effect creating a “demand-side brake” that works as a self-correcting price mechanism.

However, that doesn’t remotely apply to data centers. If accelerating 1 GW of data center capacity for one year is worth $7 billion, then clearly AI data centers, and their related energy economics, are like nothing the industry has ever seen.

Calculating the CHR

The CHR is aimed at illuminating AI data center price elasticities to gain a better sense as to how such loads could affect power markets. It’s calculated as follows: $\mathrm{CHR}_w = (R_w - C_{\text{non-elec}}) / (1 + m)$

$R_w$ is defined as the gross revenue per megawatt-hour of electricity consumed by workload type $w$. The value is “derived from API pricing, cloud compute rates or enterprise contract values.” It’s then converted to per-megawatt-hour revenue using GPU power consumption and throughput data. So, the more valuable the computing task, the greater the willingness to pay a higher price. In other words, the load becomes less elastic.

$C_{\text{non-elec}}$ is the operating cost per megawatt-hour not specifically related to power, including amortization of GPUs, facility overhead, cooling infrastructure, networking and maintenance. These come from published infrastructure cost data and industry benchmarks.

$m$ is the required return margin, i.e. the minimum profit margin required. Here, the baseline assumption is 0.30 (30%).
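The formula above can be sketched in a few lines of Python. The function and parameter names are my own, and the revenue and cost inputs in the example are made-up round numbers chosen only to show the arithmetic; they are not values from Royal’s paper. Only the 0.30 default margin comes from the baseline assumption described above.

```python
def compute_heat_rate(revenue_per_mwh, non_elec_cost_per_mwh, margin=0.30):
    """CHR in $/MWh: (R_w - C_non-elec) / (1 + m).

    revenue_per_mwh: gross revenue per MWh consumed by the workload (R_w)
    non_elec_cost_per_mwh: non-electricity operating cost per MWh (C_non-elec)
    margin: required return margin m (0.30 is the stated baseline)
    """
    if margin <= -1.0:
        raise ValueError("margin must be greater than -1")
    return (revenue_per_mwh - non_elec_cost_per_mwh) / (1.0 + margin)


# Hypothetical workload: $10,000/MWh revenue, $3,500/MWh non-electric cost.
# (10,000 - 3,500) / 1.30 is approximately $5,000/MWh.
example_chr = compute_heat_rate(10_000.0, 3_500.0)
```

Read this way, the CHR is a break-even electricity price: higher revenue per megawatt-hour raises it, while higher non-electric costs or a higher required margin lower it.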

The astonishing finding from Royal’s analysis is that “the blended CHR of approximately $6,350/MWh implies that AI data center demand will not curtail at electricity prices below roughly 127 times the current wholesale average.”

Royal notes that different AI workloads may have vastly different price tolerances. His initial estimates have them ranging from about $500/MWh for training a frontier large language model to over $53,000/MWh for frontier inference services.

Remember, this would apply to tens of gigawatts of future loads that are just now being forecast and built. He concludes that with their high opportunity costs, these massive loads “will not curtail at any price level observed in U.S. wholesale markets” (my emphasis).
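To see how far apart these thresholds sit, the sketch below collects the approximate curtailment prices quoted in this column and checks which load classes would back off at a given price. The dictionary labels, the function name and the rule that a load curtails as soon as the price reaches its threshold are simplifying assumptions of mine; the figures are the rounded numbers cited above, not precise values.

```python
# Approximate curtailment thresholds in $/MWh, as quoted in this column.
CURTAILMENT_THRESHOLDS = {
    "traditional industrial load (low end)": 40.0,
    "cryptocurrency mining": 100.0,
    "traditional industrial load (high end)": 120.0,
    "frontier LLM training": 500.0,
    "blended AI data center": 6350.0,
    "frontier inference": 53000.0,
}


def loads_that_curtail(price):
    """Return the load classes whose threshold is at or below the price."""
    return [name for name, threshold in CURTAILMENT_THRESHOLDS.items()
            if price >= threshold]


# The quoted "127 times" multiple implies a wholesale average of $50/MWh.
implied_wholesale_average = 6350.0 / 127

# At a hypothetical $150/MWh price spike, only the traditional
# price-sensitive loads back off; every AI workload keeps running.
curtailed_at_150 = loads_that_curtail(150.0)
```

Under these assumptions, the traditional “demand-side brake” engages well before any AI workload reaches its own threshold.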

Once Certain Penetration Levels are Achieved, Watch out

These price tolerances are not an issue when supply is unconstrained, but as the demand-supply imbalance increases, the CHR dynamic increasingly applies, especially in localized and transmission-constrained areas. Royal postulates that these kinds of extreme prices could be seen once critical penetration thresholds are reached, in much the same way the California energy market shifted with the duck curve. Once sufficient solar was added, price formation was structurally affected.

With data load, he suggests, the CHR effect would emerge “suddenly once sufficient data center infrastructure reaches critical mass at specific grid nodes.”

That finding has significant implications for grid operators, utility planners and regulators, as well as other electricity consumers who may find themselves priced out in various markets. The CHR metric may require additional vetting — something that is beyond my limited background and ability. But the concept fascinates me, and it’s worth further exploration, as this rapid and massive new AI demand is something we simply have never seen before.

This metric may offer great value in tracking costs by location and over time as the use of AI accelerates and the technology continues to evolve. It might even offer future economic utility value as a hedging instrument. Only time will tell.