Not long ago, the electric grid ran on a shared set of facts: weather data, flood maps and long-term climate projections from federal agencies. While imperfect, the data was broadly accessible and widely understood. Utilities, RTOs, regulators and developers might interpret the data differently, but they were at least starting from the same baseline.

At the very moment grid operators are being asked to plan for unprecedented complexity — explosive load growth from data centers, electrification of buildings and transport, and a rising cadence of climate-driven extreme events — the public data infrastructure that underpins those decisions is becoming less reliable, less complete and in some cases less available.

If this had happened a decade or two ago, it could have blinded the whole industry. Fortunately, a rapidly expanding ecosystem of private data platforms, proprietary climate models and AI-driven simulation tools is ready, willing and more than eager to fill that gap. And if government data integrity is threatened, the future of grid planning increasingly will be built not on shared public datasets but on licensed, and probably opaque, models.

This is a shift in governance as much as it is a shift in technology. And for a system as interconnected and reliability-sensitive as the power grid, it raises a question: What happens when the “ground truth” of grid planning no longer is public?

Public Data Infrastructure Is Eroding

Years ago, I toured the National Center for Atmospheric Research (NCAR), a brutalist I.M. Pei structure in Boulder, Colo., and an architecture-and-climate-science nerd’s dream day trip. On display was a room-sized Cray-1, one of the most famous early supercomputers and a reminder of how data-intensive weather prediction has always been.

Funded by the National Science Foundation, NCAR has been one of the leading scientific organizations developing the analytical methods used to track a changing planet. Along with federal agencies such as NOAA, FEMA, USGS and EPA, it has provided the baseline datasets that inform many aspects of grid planning and operations. These datasets no longer are produced on a Cray-1 (the phone in your pocket is now orders of magnitude faster), and they are not perfect, but they are standardized and transparent.

Budget uncertainty, shifting political priorities and institutional constraints have all contributed to growing concern about the durability of federal climate and environmental data programs. In one of many moves to cut government research groups, the administration announced in December 2025 it would dismantle NCAR, citing it as a source of climate alarmism. While the nonprofit that manages NCAR is challenging the action, the U.S. electric system has to prepare for a day when it cannot rely on federal weather data for grid operations.

Even where data remains available, update cycles are slowing, and agencies struggle to keep pace with rapidly changing conditions. FEMA flood maps, for example, lag actual risk, while wildfire and heat risk datasets often are fragmented across agencies and jurisdictions.

For grid operators, this matters in very practical ways. Transmission planning needs consistent weather baselines, resource adequacy assessments require shared assumptions about temperature extremes and demand patterns, and emergency planning should draw from common risk maps.

The grid has always relied on a shared view of reality. As those baselines degrade — or diverge — the system risks losing coherence.

The Rise of Private Climate and Infrastructure Intelligence

Into this gap has stepped a new class of private-sector players, offering not just data but fully integrated predictive intelligence.

One example is NVIDIA’s Earth-2 platform, a high-resolution digital twin of the entire planet. Using AI, Earth-2 aims to simulate weather and climate with a level of granularity far beyond that of traditional models. The promise is transformative: hyper-local forecasts of extreme weather, infrastructure-level risk assessments and scenario modeling that could reshape how utilities plan and operate.

Felek Abbas, senior vice president and chief technology and security officer at SPP, told RTO Insider that SPP is exploring what the Earth-2 platform could offer.

SPP expects the finer detail, plus an additional week of forecast horizon, to help it in the day-ahead market and improve its outage planning.

“If you’re expecting the load to be at a manageable place, you’re able to take an outage, but if you’re expecting the load to be really high, that’s not a time that you can afford to have an outage,” Abbas said. “More accuracy in that space means more reliability, and that’s what we’re after.”

Starting Broad or Narrow

While NVIDIA attempts to model the entire planet for any purpose — a one-planet-fits-all approach — another way to approach grid-meets-weather questions is to start with a specific industry query: How do we make the power system operate most efficiently? From there, we gather data sources, build models and test against decades of load, power pricing and weather data to develop a solution tailored for a complex industry’s needs.

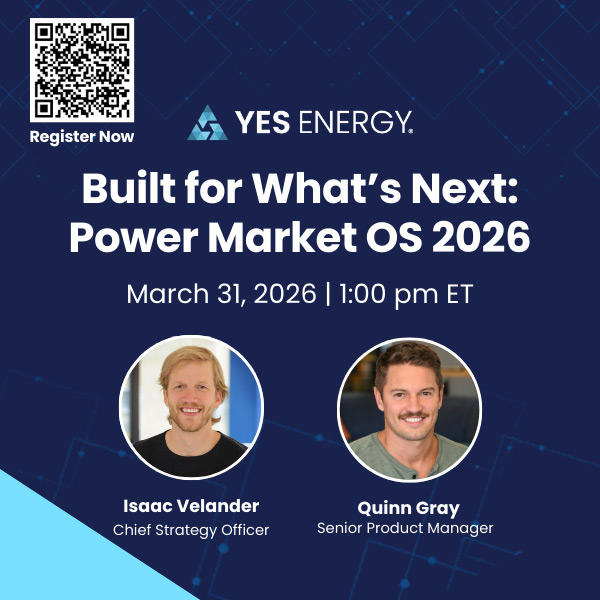

Yes Energy is a prime example of this (and as of 2025, it happens to be RTO Insider’s parent company, so it clearly has great taste in industry intelligence). It has released an all-new module in its Power Signals product for “deeper forecast analysis” that isolates individual weather effects to show how each affected historical load. It also provides a probabilistic view of future demand up to a year ahead, based on the range of observed weather outcomes.

Models, Models Everywhere

Other industries have spawned additional weather intelligence companies with some applicability to the grid. Insurance companies and asset owners in particular seek digital crystal balls to assess the risks they face.

Organizations like First Street Foundation are redefining how climate risk is measured and monetized. Its extreme weather, wildfire and macroeconomic models translate complex environmental risks into address-level scores. For example, PVcase has integrated First Street data into its platform rather than relying on outdated FEMA flood maps, helping renewable energy developers and operators better understand flood risks.

Google DeepMind has developed both WeatherNext, a family of AI weather forecasting models, and Weather Lab, a site for sharing its experimental AI forecasts, including tropical cyclone predictions. Jupiter Intelligence provides asset-level climate risk analytics for utilities and infrastructure investors, ClimateAi offers predictive climate insights tailored to operational decision-making, and Descartes Labs leverages geospatial intelligence to model environmental and economic systems at scale.

In a recent op-ed in Nature, two Oxford academics warned that rigorous standards need to be applied before trusting AI weather models. “Before weather agencies adopt AI models, the predictive skill of such models on a range of hazardous events — from heatwaves and heavy rainfall to major storms — must pass a defined minimum standard.” They proposed a protocol for training future AI systems that reserves a designated set of “iconic” extreme events solely for testing.
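To make the proposed protocol more concrete, here is a minimal Python sketch of its core idea. The designated “iconic” events are kept out of training entirely and used only to check whether a model clears a minimum bar. It assumes a catalog of historical events and a skill score per event supplied by the caller. The `Event` type, the `split_events` and `passes_minimum_standard` functions, the threshold and the placeholder event names are all hypothetical illustrations. They are not taken from the Nature piece or from any agency’s actual procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    # Hypothetical record for one historical extreme event.
    name: str
    hazard: str  # e.g. "heatwave", "heavy_rain", "storm"
    year: int


def split_events(catalog, iconic_names):
    """Keep designated 'iconic' events out of training; reserve them for testing only."""
    iconic = set(iconic_names)
    found = {e.name for e in catalog if e.name in iconic}
    missing = iconic - found
    if missing:
        raise ValueError(f"iconic events not in catalog: {sorted(missing)}")
    train = [e for e in catalog if e.name not in iconic]
    test = [e for e in catalog if e.name in iconic]
    return train, test


def passes_minimum_standard(skill_by_event, test_events, threshold):
    """Hypothetical check: every held-out event must score at or above the threshold.

    How 'skill' is measured is left to the caller; this sketch only enforces the bar.
    """
    if not test_events:
        raise ValueError("no held-out events to evaluate")
    return all(skill_by_event[e.name] >= threshold for e in test_events)


# Illustrative usage with placeholder events (not real historical data).
catalog = [
    Event("event-1", "storm", 2012),
    Event("event-2", "heatwave", 2021),
    Event("event-3", "heavy_rain", 2017),
]
train, test = split_events(catalog, ["event-2"])
print([e.name for e in train], [e.name for e in test])
```

This sketch requires every held-out event to clear the bar rather than averaging across them. That is one possible design choice. It keeps strong performance on common events from masking a miss on a rare, high-impact one.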

With or without those guardrails, we are seeing a shift from public data interpreted by utilities and regulators to private models that generate proprietary outputs embedded directly into planning and operational systems.

Implications for Utilities, RTOs and Grid Operators

For grid operators, this shift may become a source of friction and risk.

First, planning fragmentation will increase as utilities, developers and system operators rely on different datasets and models. One entity may base its load forecast on a proprietary climate-adjusted model; another may rely on historical NOAA data; and yet another may incorporate vendor-specific DER adoption projections.

The result could be a gradual erosion of alignment. Incompatible assumptions may underpin transmission plans, and divergent expectations of future conditions may emerge in interconnection studies. Regional coordination will be more difficult when participants no longer work from the same baseline.

Second, grid planning may become a “black box.” Many of the new models entering the space are proprietary and continuously evolving. Their assumptions are not fully transparent, their methodologies are not easily replicable, and their outputs may change as algorithms are updated.

For regulators and stakeholders, this creates a challenge: How do you evaluate a transmission investment justified by a model you cannot fully interrogate? How do you compare competing proposals built on different proprietary datasets?

We may move from engineering-led planning to model-led planning built on privately owned models.

Third, cost and access asymmetries are emerging. Large investor-owned utilities and well-funded developers can afford to license advanced datasets and modeling tools. Smaller utilities, municipal systems and co-ops often cannot. This creates the risk of a two-tier system of grid intelligence, where some actors operate with far more sophisticated — and expensive — insights than others.

Finally, operational dependencies are deepening.

Real-time grid operations increasingly rely on high-quality forecasts: weather-driven load, renewable generation output, wildfire risk and outage probabilities. These inputs are now being integrated into outage management systems, DERMS platforms and advanced forecasting tools — many of which depend on third-party data providers.

That creates a new form of vendor lock-in. If critical operational decisions depend on proprietary data streams, switching providers or validating outputs becomes significantly more difficult.

Regulatory and Market Implications

Regulators need to ask a seemingly simple question: Who validates the data?

If a utility files a transmission plan based on outputs from a proprietary climate model, what standard should regulators apply? Transparency? Historical accuracy? Peer review? And how should regulators enforce those standards when intellectual property protects the underlying models?

There also is a market power dimension.

Control over datasets increasingly means control over forecasts, risk perception and, ultimately, investment decisions. In that sense, private data providers may occupy a role analogous to credit rating agencies in financial markets: entities whose assessments shape outcomes but are not always fully visible or accountable.

For the grid, the stakes are particularly high because reliability depends on coordination, and coordination depends on shared assumptions. If different actors are operating on different versions of reality, the risk of misalignment — and failure — increases.

Managing the Public Grid with Private Data

I’m not arguing that private innovation is a problem. Far from it. The advances being driven by companies such as Yes Energy, NVIDIA, First Street and others are essential for managing a more complex, climate-exposed grid.

In a perfect world, society would treat public data infrastructure as critical infrastructure. However, the current administration has shown it will not consistently fund, maintain and update federal datasets at a pace that reflects their importance to national energy systems. And if it perceives climate data as a political tool rather than a neutral truth, it is unlikely to continue improving the open, standardized baselines that the industry has relied on until now.

Given the irreversible move from public to private data, transparency requirements need to evolve.

If proprietary models are used in regulated planning processes, the assumptions, validation methodologies and sensitivity analyses should be disclosed. Regulators do not need to see every line of code, but they do need confidence in the outputs.

Hybrid approaches should be explored. Public-private partnerships may combine the strengths of open data and private innovation, creating shared validation frameworks that preserve comparability while enabling advancement.

Data itself should be recognized as a core component of grid infrastructure. We regulate power plants and transmission lines. We set standards for reliability and interconnection. But we do not yet have a coherent framework for governing the data that determines where those assets are built and how they are operated.

The electric grid is becoming more digital, more dynamic and more exposed to climate risk. The question no longer is whether we have enough data to run the grid; it is whether the data we rely on is shared, trusted and governed in the public interest.

Reliability is not just a function of power plants and wires; it is a function of whether everyone is working from the same map.

Power Play columnist Dej Knuckey is a climate and energy writer with decades of industry experience.